Platform governance goes to court

IT'S MONDAY, AND THIS IS DIGITAL POLITICS. I'm Mark Scott, and as the war in the Middle East enters its second month, here's a map that explains why we are all in for major energy price hikes (or shortages) in April.

— American and European courts are doing more for social media oversight than the growing list of online safety regimes worldwide.

— Middle Powers are now testbeds for different forms of AI, competition and platform governance regulation. Some will work, others will not.

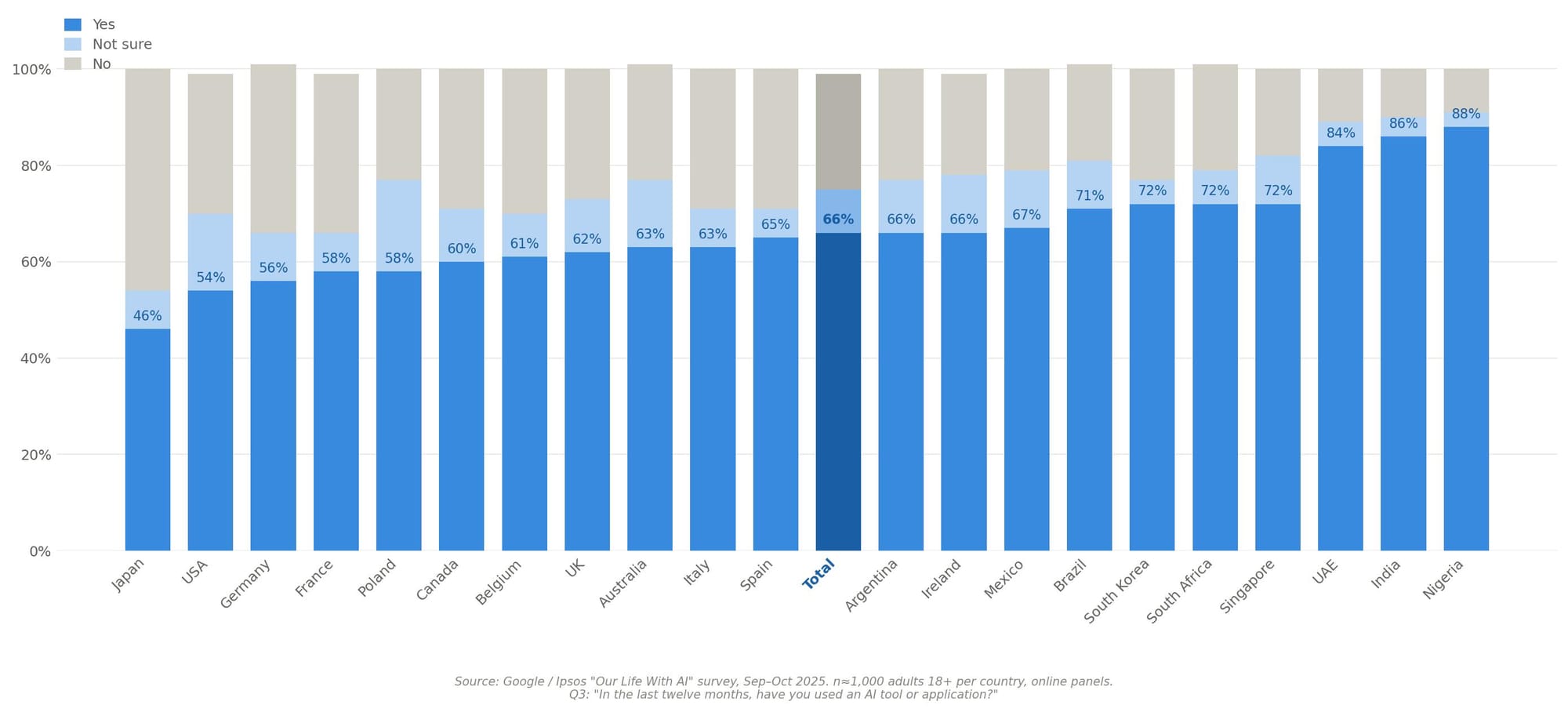

— Two-thirds of people polled worldwide say they have used AI, in some form, over the last 12 months.

Let's get started:

LITIGATION VERSUS LEGISLATION?

ONE OF THE HALLMARKS OF DIGITAL policymaking over the last five years is the drive toward national or regional online safety rules. The likes of Australia's Online Safety Act; the European Union's Digital Services Act; and the United Kingdom's Online Safety Act epitomize lawmakers' efforts to create greater accountability and transparency for social media giants. That, in turn, has led to a pushback from some of these companies and the United States (at least within the federal government), which criticized these rules as either being overly cumbersome or an illegitimate attack on people's free speech rights.

My day job means I'm pretty clued up on most of these (Western) online safety regimes. If you want a wonky policy discussion about mandatory data access requirements or the inner workings of companies' annual risk assessments and external audits, then I'm your man. Yet we need to be honest about the current state of play of these online safety regimes. They are often too cumbersome, under-resourced and overly politicized to meaningfully improve people's experiences online, at least in the short term.

In contrast, four recent court decisions (two in the US, two in the EU) demonstrate how judges and juries have now had a more significant impact on platform governance than the growing number of national/regional online safety rulebooks. For countries similarly seeking to create greater transparency and oversight for the likes of TikTok and YouTube, this "litigation over legislation" strategy may be worth pursuing. That's especially true given how the current White House is embedding provisions to ward off future digital regulation in its trade negotiations with third-party countries.

Before we get to the court cases and their implications, let's lay out two caveats.

First, it's not a question of litigation or legislation. These policy levers do different things. For officials, it's more about potentially front-running lawsuits, based on existing statutory oversight, before more long-term online safety regulation can navigate countries' often labyrinthine democratic processes. Second, litigation often builds on existing regulatory playbooks, providing individual citizens the ability to fill in gaps where slow-moving — and often untested — legislation has yet to take hold.

OK, caveats covered. Now, to the cases. I'll keep these brief, given how much coverage there has been, especially related to the American lawsuits.

In a one-two punch, two US courts, one in California and another in New Mexico, took swings at Meta and Google and, separately, at Meta alone. On March 25, a jury in Los Angeles awarded $6 million in damages to a plaintiff who had accused YouTube and Instagram of making deliberate design choices that caused her to become addicted to both platforms. In response, both Meta and Google rejected those assertions and said they would appeal.

In New Mexico, a separate jury on March 24 found that Meta had violated state consumer protection laws and ordered the tech giant to pay $375 million in damages. The case revolved around accusations from the state's attorney general that the social media company's services were designed to maximize engagement for children without embedding the appropriate safety measures to protect minors. In response, Meta said it kept people safe on its platforms and would similarly appeal.

In Europe, a regional court in southern Germany upheld a complaint on March 11, initially filed by a local consumer protection agency. It required YouTube to stop online influencers from posting sponsored content if the underlying advertiser was not disclosed and clearly stated. The court decision is not yet final. But the preliminary ruling may force YouTube to place a "sponsored post" label across all such videos, as well as require content creators to make public who is paying for such ads. It's a clarification to the EU's Digital Services Act (Article 26) related to how online platforms handle transparency issues related to online advertising.

Finally, a Dutch court on March 26 forced Elon Musk's xAI to stop generating and distributing sexually explicit images of people without their consent in the Netherlands, or face daily fines of around $115,000. The case had been brought by Offlimits, a local advocacy group. It followed global outrage over, and regulatory investigations into, how xAI's Grok artificial intelligence tool and X, which hosted it, had been used to create realistic deepfake explicit images of women and children. xAI's lawyers had said it was impossible to remove all such abuse from the social media platform. The company also stopped Grok from creating such images in early 2026, though the Dutch judge believed there was still reasonable doubt that xAI's attempts would be effective.

Four legal cases, four slightly different legal issues. More lawsuits are pending, and the current cases may still be overturned on appeal.

Yet what is striking are the similarities between these lawsuits — and what they do compared to slow-moving online safety regulation.

Two central criticisms aimed at legislation that targets social media are that 1) these platforms have significant liability carve-outs for what people post online and 2) the likes of Australia's and the UK's Online Safety Acts represent illegal attacks on free speech rights. The four separate cases, outlined above, mostly circumvent these issues by focusing on the design of these platforms, not on how they moderate individual social media posts.

This is a significant distinction and one, to be fair, also baked into national/regional online safety rules. The point of these lawsuits was not to dictate what could be posted online. Instead, they took aim at the intrinsic design choices that the likes of xAI, YouTube and Instagram had made that, at least in the views of the American juries and European judges, failed to live up to these companies' obligations under existing legislation. It's hard to accuse these decisions of undermining free speech rights when they focus exclusively on the wonkiness of how content recommender systems operate or the transparency requirements related to influencers' sponsored posts.

The Censorship Industrial Complex, it is not.

The second meaningful difference between these lawsuits and the ongoing conveyor belt of online safety regulation is how much more personal such litigation makes the potential harms associated with social media.

I can count, on one hand, the number of experts who have read the EU's most recent risk assessments and external audits related to how so-called Very Large Online Platforms and Search Engines combat alleged systemic risks under the bloc's Digital Services Act. I joke. But only just. These documents run into the hundreds of pages; are inherently legalistic in both tone and nature; and — after two years of these reports being published — have not provided meaningful transparency for the average EU citizen.

In contrast, the often personal (and routinely tragic) stories at the heart of such lawsuits, as well as the spectacle of high-profile tech executives taking the stand to defend their platforms, cut through to the average social media user more effectively than decades' worth of dense policy documents. They demonstrate the potential real-world harms resulting from poor design choices in a way that is just not possible via online safety regulation which, inherently, takes a systemic view of such problems.

Inherently, platform governance litigation does something different than online safety legislation. It is not one over the other. But at a time when regulatory headwinds are gathering against countries' attempts to pass such regulation, a shift toward national courts — as a means to boost transparency and accountability for some of the world's largest companies by centering these debates in the lived experiences of individuals — is a much-needed step.

Chart of the day

THE US LIKES TO THINK IT'S THE CENTER of the AI revolution. And at least when it comes to where these systems are built, that certainly is true.

But Americans remain behind the curve in the use of artificial intelligence tools and applications compared to their peers across both the West and the Global Majority, based on a worldwide survey conducted between September and October 2025.

On average, 66 percent of those polled said they had used such services over the last 12 months. At 88 percent, Nigeria was the most AI-savvy country. In Japan, by contrast, only 45 percent of people said they had used AI over the last year.

MIDDLE POWERS: LABORATORIES OF DIGITAL POLICYMAKING

IN THE EARLY 1930s, THE US SUPREME COURT justice Louis Brandeis referred to US states as so-called "laboratories of democracy." By this, he meant smaller jurisdictions, within the Union, could try different social and economic models without these experiments unduly harming all 50 states. I can't help but think of that expression as I look over the similar digital policymaking crucible underway in so-called Middle Power countries, or states like Brazil, Japan and the UK that sit slightly outside the trifecta of global digital policymaking powers: the US, EU and China.

Taken together, three ongoing experiments, one in each of these jurisdictions, demonstrate how national lawmakers and officials are meeting local needs in an increasingly globalized digital world. They offer potential alternatives for other Middle Power countries that do not necessarily want to be rule-takers from global powers when it comes to digital competition, artificial intelligence and data protection.

First, to London. I remain skeptical the UK government (of all political flavors) has the will to implement a serious digital policymaking agenda. Other, that is, than one that prioritizes foreign direct investment over all other demands. Yet the country's Competition and Markets Authority (CMA) is slowly implementing the Digital Markets, Competition and Consumers Act, an updated digital antitrust rulebook that offers an alternative to the more bureaucratic approach under the EU's Digital Markets Act.

A quick snapshot about how these competition rules operate. Under the UK's regime, regulators first determine if a company has so-called "Strategic Market Status," and then create specific rules to ensure its dominance doesn't skew the market. Under the EU's rulebook, companies are designated as "gatekeepers," and then — collectively — European regulators determine if these firms' activities infringe on smaller players.

Before I get angry emails, yes, Doug Gurr, a former senior Amazon executive, was appointed as chairman of the British competition agency in February. That has raised concerns the CMA will pull back on its digital enforcement work. But since early 2026, the regulator has issued two statements (one linked to how people and businesses interact with Google's search product; another aimed at leveling the playing field in both Google's and Apple's app stores) that are worth tracking.

Both are designed to loosen these services' control of what are now viewed as dominant parts of the online economy. Critics will say they don't go far enough to hobble these services. But the UK's revamped digital antitrust regime is designed to create bespoke interventions, based on individual companies' services, that may prove more nimble than the one-size-fits-all approach outlined within the EU's Digital Markets Act.

What's worth paying attention to, for other Middle Powers, is whether the proposed changes in both Google search and the app stores give greater breathing space to competitors and allow users to more easily swap to rival search products. If that does start to happen in the UK (and it's still an 'if'), then London's digital antitrust approach may be worth adopting.

Shifting gears — both geographically and thematically — takes us to Japan where the country's AI regulation is now more than six months old. Unlike the top-down legislation outlined by the likes of South Korea and Europe, Japan has instead implemented mostly voluntary guidelines, backed up with expanded enforcement powers for existing regulatory agencies, to create a flexible approach to AI oversight. At least, that is what Tokyo would like you to believe.

The legislation also includes a public commitment to invest $6.3 billion, over five years, in AI-linked emerging technology, as well as other high-tech industries like drones and quantum computing. The idea is to combine a co-regulatory approach to AI governance (again, supported by stricter enforcement from the likes of the country's privacy regulator) with direct investment in local firms competing on the global stage.

Japan's approach stands somewhere between that of the 'regulate-first' EU and the 'don't-regulate' US, albeit via an AI governance framework that relies heavily on voluntary corporate compliance. Still, it may represent a potential third way for other countries both concerned about how AI is rolled out nationally and wanting to support local industries in this global technology race.

Finally, to Brazil. Latin America's most populous country enacted its so-called ECA Digital law earlier this month, specifically aimed at protecting children online. The regulation gained widespread traction after local YouTube channels were found to be profiting off sexualized videos of children.

Key provisions include: age verification requirements for platforms that host content potentially inappropriate for minors, with such age-gating taking place when an account is created; a duty on providers of digital services to prevent addictive design practices like infinite social media scrolling; an obligation for companies to remove child-related criminal content and notify authorities; and the creation of a new law enforcement center to coordinate the response to potential violations.

What's different from other online safety regimes is that it puts a significant onus on companies, not the government, to enforce individual provisions. That will inevitably create higher regulatory burdens for companies operating in Brazil — and some firms will likely pull out because of that.

But for other countries, which don't have the financial resources to implement a UK-style Online Safety Act, the outsourcing of such requirements to digital services where much of the potential harm is housed may offer a way forward in adopting online safety regulation without similarly incurring hefty increases in public money to support such oversight.

What I'm reading

— The Reuters Institute at the University of Oxford delves into the online media/news habits of Generation Alpha. It's more social media, fewer websites. More here.

— The DSA Observatory explains what lessons it learned when its application for data access to privately-held social media data was rejected under the EU's Digital Services Act. More here.

— The Oversight Board published recommendations for how Meta should implement its community notes instrument across its global platforms. More here.

— New York University's Center on Tech Policy produced a comparison of the 60 bills, across nearly 30 US states, aimed at regulating companion AI chatbots. More here.

— Almost 70 countries within the World Trade Organization agreed to an interim pathway toward global rules for digital trade, even though a final deal is unlikely in the short term. More here.
