You say child safety, I say data protection

WELCOME TO THE FREE MONTHLY EDITION of Digital Politics. I'm Mark Scott, and many of you were likely traveling to RightsCon this week in Zambia. It looks like that global digital rights conference was canceled due to pressure from China.

I don't typically promote my day job. But this report outlining how the European Union's next seven-year budget can support digital democratic resilience has taken up a lot of my last six months. Enjoy.

— Protection for children online runs counter to long-standing fundamental privacy rights. It's time to acknowledge those opposing forces in digital policymaking.

— AI-enabled deepfakes are flooding the US mid-term elections. But it's the use of large language models to track voter behavior that is the real concern.

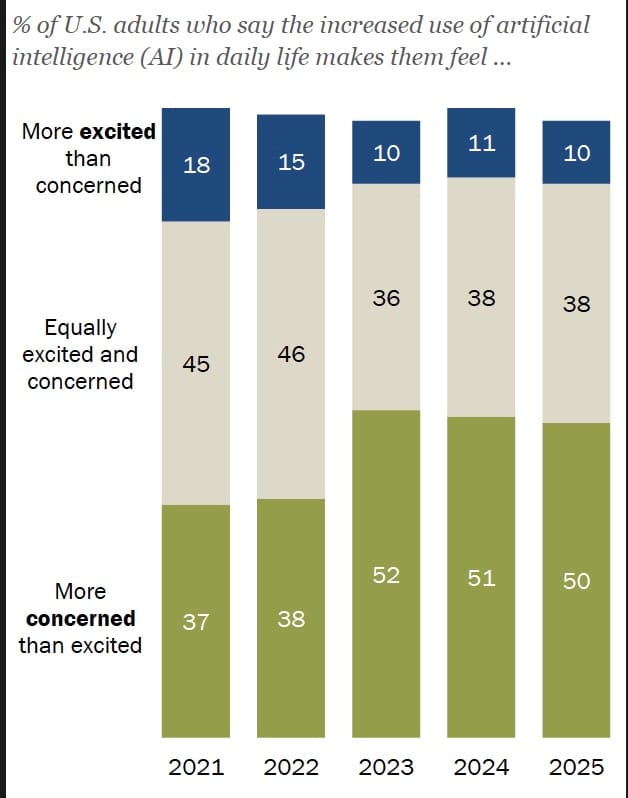

— Half of Americans polled remain wary of how artificial intelligence is going to affect daily life.

Let's get started:

WHEN TWO DIGITAL POLICYMAKING TOPICS COLLIDE

IF TECH POLICY WAS THE MOVIE ZOOLANDER, then online child safety would be "so hot right now." Age verification requirements for app stores and digital services abound. Everyone under the sun wants an Australia-style child social media ban. Regulators from the EU, United Kingdom and United States are falling over themselves to announce child-focused investigations or inquiries.

Yet these efforts, often based on legitimate concerns about how minors can be protected in the digital world, run counter to long-standing data protection rules aimed at giving us all greater control over our online data. In many cases, privacy and child safety policymaking are pulling in opposite directions. Often, officials who drafted a country's data protection rules are not in contact with those pursuing child online safety goals.

It's creating a digital policymaking mess that 1) does not end up protecting kids online; 2) fundamentally erodes digital privacy rights at a time of mass data collection and surveillance; and 3) leads to regulatory confusion that leaves individuals at risk of being both more surveilled and less safe online at the same time.

In short, the status quo is not working. Let's break down the three tensions that remain unresolved.

Here's what paid subscribers read in April:

— Hungary's election shows the limits of misinformation/disinformation; Washington's digital trade policy is more joined up than you may think; How many Australian teenagers have been kicked off social media? More here.

— The EU-US digital relationship is still on life support; What Ireland got wrong with its upcoming AI summit; Europe issued more than $1.3 billion in data protection fines in 2025. More here.

— How China, the EU and US are approaching the global AI race; Why Brussels is prioritizing kids and marketplaces under the Digital Services Act; Which US states are leading the way on digital legislation? More here.

— Europe and the US have two opposing takes on digital sovereignty; How the US FISA renewal fails to understand its global impact; AI models are getting "smarter" faster than ever before. More here.

A central component of many child safety regimes is age verification and assurance. These efforts often rely on third-party companies collecting people's sensitive data (e.g., passport details, credit card information and other data points confirming they are older than 18) before they can access specific digital services. As countries enact age verification/assurance provisions, a cottage industry of these providers has sprouted up to give the likes of Meta, TikTok and Netflix the ability to meet these legal requirements to check people's ages.

Much of this data is inherently sensitive, under the definition of most countries' data protection rules. That distinction matters. It means that companies must collect the minimal amount of data required; that such information must be gathered for specific purposes (and not re-used for other efforts); and that, once that task is complete, the data should be deleted. That trifecta is the mainstay of Western privacy standards.

But the age verification/assurance requirements run directly counter to those principles. In many cases, companies hold onto this sensitive data — and even collect more of it than technically necessary — to cover their backs in case regulators come knocking to check for compliance. That is a totally defensible corporate move. But it represents a potential data protection violation, under most countries' rules, because it fails the "trifecta" principle, outlined above.

Case in point: Spain's data protection authority fined Yoti, a British age verification service, $1.1 million in March. That levy related to unlawfully processing people's biometric data; processing that data without the right consent; and holding onto that data for too long. Yoti rejected those findings. But it's ironic that this fine occurred in a country that is aggressively seeking to ban teenagers from social media — a pledge that will require hefty age verification to ensure compliance.

This contradiction plays into the second stumbling block that few policymakers want to acknowledge. The ability to continually check that minors are not using barred digital services inevitably requires the creation of extensive digital infrastructure. That involves third-party age verification services like Yoti, as well as internal corporate systems within app stores and social media giants to demonstrate that they are complying with these rules.

But what this growing digital infrastructure demonstrates is that what may begin as child safety-focused efforts can quickly snowball into a more expansive data collection effort that may run afoul of countries' existing privacy regimes. Those rules specifically require companies to only use people's data for the purposes initially outlined to those individuals. Say, to check their age before using a digital service. What those firms cannot then do is use the same personal information for other purposes without going back to people to get their separate consent.

Security experts, for instance, discovered that a verification provider for the likes of LinkedIn, Roblox and ChatGPT also provided separate surveillance services for US and Canadian authorities. That included the ability to run almost 270 separate verification checks, including comparing facial recognition results against specific government watchlists. Persona, the company, denied it had used the verification data for government-related work. But the cybersecurity researchers said there were few, if any, specific limitations if the firm decided to do so in the future.

The third friction is both philosophical and practical.

Under Western privacy standards, individuals have the right to access their data, delete it (where necessary), object to it being collected in the first place and correct it if the information is wrong. It's a basic democratic safeguard to empower people to control how their data is collected, stored and used.

Yet the child-focused age verification infrastructure, as described above, fundamentally breaks the direct connection between individuals and companies that collect this information. If I'm using, say, TikTok, that platform may then outsource its age verification/assurance work to a third-party contractor that remains anonymous to the end user (aka: me). It's hard to object to your data being collected if you don't know who to complain to in the first place.

This may sound too academic. But it has real-world implications.

IDMerit, a global identity verification company with operations in more than a hundred countries, was found to have left one billion records from 26 countries, including people's dates of birth, home addresses and personal ID numbers, unprotected in an online database. Because IDMerit (which focuses on the financial services industry) provided its verification services to other companies, few, if any, of the people involved were aware of the firm's existence and therefore could not directly engage with the company over how it mishandled their data.

Extrapolate that data breach onto the emerging digital infrastructure around child online safety, and the gap between individuals' rights and unaccountable (leaking?) databases becomes even more pronounced.

What is described above is not theoretical. It is already underway, and it pits legitimate privacy concerns against equally valid online child safety discussions. This is not a question of one policy topic taking precedence over another. We're living in a gray zone somewhere in between.

But in the rush to protect children from digital threats, consideration of the impact on other valid policy areas is woefully missing. Privacy rights, including those involving children, are overridden by the policy incentive to "do something" around child online safety.

That is a political decision by elected officials. But we should acknowledge that it comes with significant downsides.

Chart of the week

THE US IS THE KNOCK-OUT global leader when it comes to AI. But wariness among its citizens about how the emerging technology will affect daily life has been creeping upward over the last five years.

Currently, 50 percent of those polled by the Pew Research Center said they were more concerned than excited about the increased use of AI in daily life. That compares to just 10 percent who were more excited than concerned.

THE AI THREAT TO THE MID-TERMS NO ONE IS TALKING ABOUT

WHEN AN AI DEEPFAKE VIDEO OF JAMES TALARICO, the Democratic Senate nominee for Texas, started doing the rounds on social media in March, alarm bells started to ring. The one-minute fake pictured a lifelike Talarico speaking directly to camera, repeating old social media posts that he had written, often years before. The post ran with an "AI generated" disclaimer. But it was small enough for most would-be voters to easily miss it.

The term "AI slop" has entered public conversation for a reason. Ahead of the US mid-term elections in November, concerns are high that the vote will be consumed by AI-generated attack ads and other social media posts that will make it almost impossible to discern fact from fiction. It does not help that Donald Trump continues to use AI to depict himself and his enemies.

But we are missing the true use case of artificial intelligence in the upcoming November election. And it should be engendering a lot more worry compared to the legitimate concern of the "AI-slopification" of the mid-terms.

The real election-related use case for AI is discerning how best to convince people to vote one way or another. It comes on the back of ongoing mass data collection of US citizens' personal data (often via commercial data brokers) and the increasing use of sophisticated AI systems in all aspects of daily life. Combined, these trends are likely to provide political consultants and politicians powerful levers to pull in what is already turning out to be one of the most divisive American elections in recent memory.

There are party political divides on how willing people are to use these AI-powered tools. A survey from the American Association of Political Consultants found that 64 percent of Republican consultants used AI in their daily work versus 49 percent of their Democratic counterparts. That's not a meaningful difference. But it does demonstrate Republicans' slight advantage in using the latest technology to reach would-be voters.

So what does this AI-fueled political advocacy actually look like?

One service called Resonate includes a dataset of thousands of data points pulled from millions of American citizens. It uses machine learning techniques to understand voter behavior, craft targeted social media messaging and predict how individuals may vote. Another, EyesOver, pulls in data from multiple social media sites and then uses AI to predict how that sentiment may affect specific political campaigns or activities.

Much of this is traditional political consultancy dressed up to look like shiny AI-powered wizardry. But it's undeniable that with large language models becoming exponentially more powerful, the ability of these services to glean greater insight into voter behavior, fueled by vast amounts of data on individual voters, is expanding rapidly.

The most novel use of AI in this year's US mid-term elections is something called "silicon sampling." Companies are increasingly using AI systems to simulate voter populations instead of using polling to identify people's electoral intentions. In one case, Aaru used census data to replicate voter districts and then created AI agents to act like voters. Another firm, MiroFish, relied on open-source AI models to forecast public opinion by similarly simulating actual voters through the combination of multiple datasets.

On paper, this use case is more economical than traditional analogue polling. But the reliance on large language models to simulate voters' intentions has some serious downsides. At the top of that list is the inherent bias that AI simulations create due to the inequalities baked into the models upon which they rely. These AI systems are only as good as the data that builds them. All current next-generation models are still biased in ways that almost certainly under-represent minority groups. That makes it likely that such AI simulations will also undercount minority voters in whatever AI-powered polling is run via these firms' glitzy dashboards.

Much of this is the realm of political consultants, not would-be voters. And compared to the inner workings of AI-powered surveys and metrics, the visual nature of the threat posed by AI deepfakes can feel more urgent and tangible. But that does not mean this AI political wonkery is less of a threat.

Most AI-enabled divisive political content is flagged almost as soon as it is published. Such material is inevitably high-profile. The response to it is equally public. That is not the case for most back-office political consultancy work that relies more and more on opaque, data-hungry AI systems which now drive how political campaigns interact with voters.

That work is just not public enough to garner most people's attention. But the fact that such AI systems now form part of many mid-term political campaigns should be a reminder that this emerging technology, as in much of our lives, is increasingly central to how political operations work.

What I'm reading

— The European Commission claimed that Meta's Instagram and Facebook failed to identify, assess and mitigate risks to minors under the EU's Digital Services Act. More here.

— New Mexico's Attorney General proposed a series of significant changes to Meta's business model and platform design as part of remedies proposed in a second trial against the social media giant that started on May 4. More here.

— A group of academics tested how social media and online streaming may be associated with varying levels of addictiveness. Across two survey groups, they did not find meaningful increases in addiction compared to the general population. More here.

— Researchers polled Australian teenagers about how the country's social media ban had affected them. They found that most were still on the platforms, but they would leave if their friends did too. More here.

— The European Parliament published an analysis on how Google's AI-powered snippets at the top of search queries affected publishers' revenue and overall media freedom. More here.
